In the context of SSL/TLS:

- A KeyStore is a secure collection of identity certificates and their private keys. In this guide, KeyStores use the Java KeyStore (.jks) file extension.
- CN (Common Name) is the name of the server that the SSL certificate protects.
- FQDN (Fully Qualified Domain Name) is the complete domain name that identifies a host or server.

If SSL encryption is enabled in Hevo, Hevo needs three files to configure Apache Kafka as a Source: the CA file, the client certificate, and the client key. To generate these files, log in to the Kafka server, go to the folder where the Kafka TrustStore and KeyStore are located, and perform the following steps:

1. Generate Client Certificate and Client Key

- Enter the following command on the Kafka server command line, where {validity} is the number of days the certificate remains valid:

  ```shell
  keytool -keystore {key_store_name}.jks -alias {key_store_name_alias} -keyalg RSA -validity {validity} -genkey
  ```

  Note: The alias names the key entry inside the KeyStore. Reuse the same alias in all the following steps.

  Note down the password you set for each KeyStore and TrustStore, as later steps ask for them.

- Answer the questions displayed on the interactive prompt.

  For the question "What is your first and last name?", enter the CN for your certificate.

  Note: The Common Name (CN) must match the fully qualified domain name (FQDN) of the server so that Hevo connects to the correct server. Refer to this page to find the FQDN based on the type of the server.

2. Create your Certificate Authority

Note: This step is optional if you already have a CA to sign the certificates.

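A Certificate Authority (CA) signs the certificate of each server in the cluster. Clients and brokers can then verify the certificates they are presented, which helps prevent unauthorized access.

Run the following command on the Kafka server command line to create your own CA:

```shell
openssl req -new -x509 -keyout ca-key -out ca-cert -days {validity}
```

The command writes the CA private key to ca-key and the CA certificate to ca-cert. It also prompts for a PEM pass phrase that protects ca-key; you need this pass phrase as {ca-password} when you sign certificates in step 4.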
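The CA can also be created without interactive prompts, which helps when the setup is scripted. In this sketch, -subj answers the Distinguished Name questions and -passout sets the pass phrase for ca-key; the ca-demo directory, the subject Example Kafka CA, the pass phrase ca-secret, and the 365-day validity are hypothetical placeholders.

```shell
#!/bin/sh
# Non-interactive sketch of creating the CA. The directory, subject,
# pass phrase, and validity are hypothetical placeholders.
set -e

DIR=ca-demo          # hypothetical scratch directory
CA_PASS=ca-secret    # hypothetical {ca-password}
mkdir -p "$DIR"

# -newkey creates a 2048-bit RSA key, -subj answers the Distinguished Name
# prompts, and -passout sets the pass phrase that encrypts the CA key.
openssl req -new -x509 -newkey rsa:2048 \
  -keyout "$DIR/ca-key" -out "$DIR/ca-cert" -days 365 \
  -subj "/CN=Example Kafka CA" -passout "pass:$CA_PASS"

# Show the CA's subject and the dates between which its certificate is valid.
openssl x509 -in "$DIR/ca-cert" -noout -subject -dates
```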

3. Add the Certificate Authority to a TrustStore

A TrustStore is a secure collection of CA certificates that the broker trusts.

Run the following command on the Kafka server command line to add the CA to the broker's TrustStore. When keytool asks whether to trust the certificate, answer yes:

```shell
keytool -keystore {broker_trust_store}.jks -alias CARoot -importcert -file ca-cert
```

4. Sign the certificate with the CA file

Run the following commands on the Kafka server command line to sign the certificate with the CA:

- Generate a certificate signing request (CSR) from the KeyStore:

  ```shell
  keytool -keystore {key_store_name}.jks -alias {key_store_name_alias} -certreq -file cert-file
  ```

- Sign the CSR with the CA:

  ```shell
  openssl x509 -req -CA ca-cert -CAkey ca-key -in cert-file -out cert-signed -days {validity} -CAcreateserial -passin pass:{ca-password}
  ```

- Import the CA certificate and then the signed certificate into the KeyStore. Import the CA certificate first so that keytool can build the certificate chain when it imports the signed certificate:

  ```shell
  keytool -keystore {key_store_name}.jks -alias CARoot -importcert -file ca-cert
  keytool -keystore {key_store_name}.jks -alias {key_store_name_alias} -importcert -file cert-signed
  ```
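Before importing cert-signed, you can confirm that it actually chains to your CA with openssl verify. The sketch below is a standalone dry run: it creates its own CA and uses an openssl-generated CSR as a stand-in for the keytool -certreq output, then runs the same signing command as above with concrete values. The sign-demo directory, the pass phrase ca-secret, and the CN kafka.example.com are hypothetical.

```shell
#!/bin/sh
# Standalone dry run of signing a CSR and verifying the result.
# Directory, pass phrase, and CNs are hypothetical placeholders.
set -e

DIR=sign-demo        # hypothetical scratch directory
CA_PASS=ca-secret    # hypothetical {ca-password}
mkdir -p "$DIR"

# Throwaway CA (in the real flow, ca-cert and ca-key come from step 2).
openssl req -new -x509 -newkey rsa:2048 \
  -keyout "$DIR/ca-key" -out "$DIR/ca-cert" -days 365 \
  -subj "/CN=Example Kafka CA" -passout "pass:$CA_PASS"

# Stand-in for the keytool CSR (cert-file), with the server FQDN as the CN.
openssl req -new -newkey rsa:2048 -nodes -keyout "$DIR/client-key" \
  -out "$DIR/cert-file" -subj "/CN=kafka.example.com"

# Same signing command as in the steps above, with concrete values.
openssl x509 -req -CA "$DIR/ca-cert" -CAkey "$DIR/ca-key" -in "$DIR/cert-file" \
  -out "$DIR/cert-signed" -days 365 -CAcreateserial -passin "pass:$CA_PASS"

# Succeeds (and prints "...: OK") only if cert-signed was signed by ca-cert.
openssl verify -CAfile "$DIR/ca-cert" "$DIR/cert-signed"
```

On the Kafka server, running only the final openssl verify line against your real ca-cert and cert-signed is enough for the check.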

Before extracting the client key from the KeyStore, convert the KeyStore file from .jks format to PKCS12 (.p12) format. openssl cannot read .jks files, but it can read PKCS12 files.

Run the following commands on the Kafka server command line:

- Convert the KeyStore from .jks format to .p12 format:

  ```shell
  keytool -v -importkeystore -srckeystore {key_store_name}.jks -srcalias {key_store_name_alias} -destkeystore {key_store_name}.p12 -deststoretype PKCS12
  ```

- Extract the client key into a .pem file:

  ```shell
  openssl pkcs12 -in {key_store_name}.p12 -nocerts -nodes > cert-key.pem
  ```

  Hevo accepts the client key in this PEM format. The -nodes option writes the key without encryption, so keep cert-key.pem private.
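A quick check before uploading is to confirm that cert-key.pem belongs to the client certificate by comparing the public key derived from each file. The sketch below is self-contained, so it swaps in openssl for keytool: it builds a stand-in .p12 with openssl pkcs12 -export from a self-signed certificate (in place of cert-signed), then runs the same extraction command as above. The p12-demo directory, the password changeit, the name client, and the CN are hypothetical.

```shell
#!/bin/sh
# Sketch: extract the key from a .p12 and confirm it matches the certificate.
# Directory, password, entry name, and CN are hypothetical placeholders.
set -e

DIR=p12-demo        # hypothetical scratch directory
P12PASS=changeit    # hypothetical .p12 password
mkdir -p "$DIR"

# Stand-in for the keytool-produced .p12: a self-signed cert and its key
# bundled by openssl (the real flow uses the converted KeyStore instead).
openssl req -new -x509 -newkey rsa:2048 -nodes -keyout "$DIR/orig-key.pem" \
  -out "$DIR/cert-signed" -days 365 -subj "/CN=kafka.example.com"
openssl pkcs12 -export -in "$DIR/cert-signed" -inkey "$DIR/orig-key.pem" \
  -name client -out "$DIR/client.keystore.p12" -passout "pass:$P12PASS"

# Same extraction as above; -passin supplies the .p12 password without a prompt.
openssl pkcs12 -in "$DIR/client.keystore.p12" -nocerts -nodes \
  -passin "pass:$P12PASS" > "$DIR/cert-key.pem"

# Derive the public key from the extracted private key and from the certificate.
openssl pkey -in "$DIR/cert-key.pem" -pubout > "$DIR/key.pub"
openssl x509 -in "$DIR/cert-signed" -noout -pubkey > "$DIR/cert.pub"

# Identical public keys mean the private key belongs to the certificate.
if cmp -s "$DIR/key.pub" "$DIR/cert.pub"; then
  echo "cert-key.pem matches cert-signed"
else
  echo "MISMATCH: cert-key.pem does not belong to cert-signed" >&2
  exit 1
fi
```

On the Kafka server, only the last three commands are needed, pointed at your real cert-key.pem and cert-signed.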

You can now find the following files in the Kafka server folder where the Kafka TrustStore and KeyStore are located and upload them to Hevo to configure Apache Kafka as a Source.

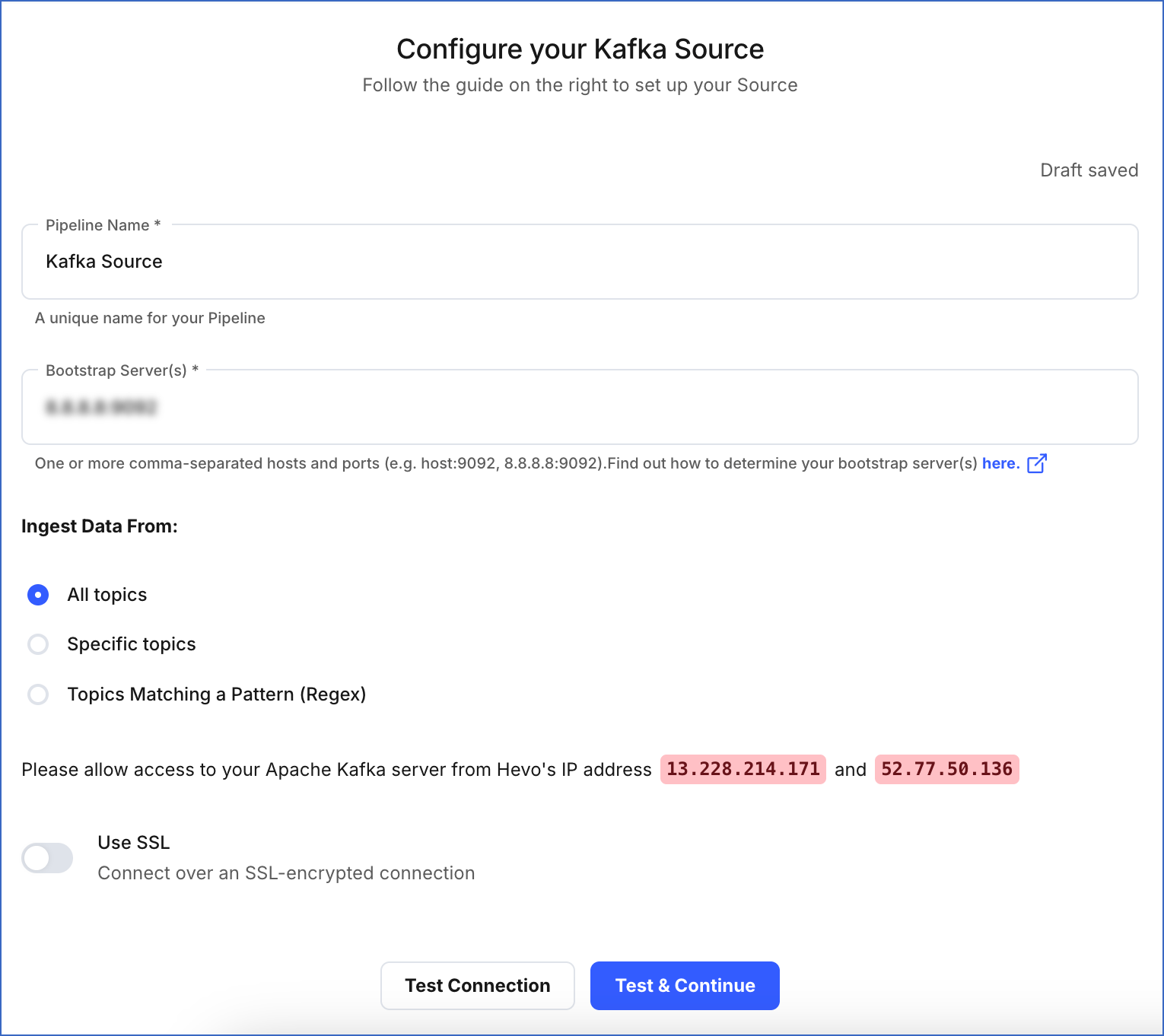

Perform the following steps to configure Apache Kafka as the Source in your Pipeline: